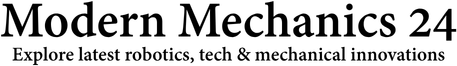

A research team at the Korea Advanced Institute of Science and Technology (KAIST) has developed a control technology for quadrupedal robots that lets them perceive their surroundings, assess obstacles, and adjust their movement in real time.

The new system, called DreamWaQ++, enables a robot to walk while actively understanding its surroundings.

Instead of reacting only after touching an obstacle, the robot can detect hazards in advance and decide how to move, much like animals carefully examining the ground before taking a step.

The work was led by Professor Hyun Myung of KAIST's School of Electrical Engineering, in collaboration with EuRoboTics Co., Ltd., a startup from his lab. The study has been published in IEEE Transactions on Robotics.

The KAIST team had earlier created a robot control system called DreamWaQ, which allowed quadrupedal robots to walk even without visual information. That system relied on proprioceptive sensing, which means the robot estimates terrain using internal sensors such as joint encoders and inertial measurement units.

This approach enabled robots to navigate environments where cameras or visual sensors might fail, such as dusty disaster sites or dark areas. The robot could maintain stable walking even when it could not see the ground clearly.
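
To make "estimating terrain from internal sensors" more concrete, here is about the simplest cue an IMU offers: how far the body is tilted, which on a slope partly reflects the incline. This is a minimal sketch assuming a stationary robot and a body frame with z pointing up out of its back; the function name is hypothetical, and it is not the DreamWaQ estimator, which the article does not describe. While the robot is moving, the accelerometer also measures the robot's own acceleration, so a real estimator has to do considerably more than this.

```python
import math


def body_tilt_deg(ax: float, ay: float, az: float) -> float:
    """Angle in degrees between the body's z-axis and the measured 'up' direction.

    Assumes the robot is standing still, so the accelerometer reading
    (ax, ay, az) is the reaction to gravity expressed in the body frame,
    with z pointing up out of the robot's back.
    """
    norm = math.sqrt(ax * ax + ay * ay + az * az)
    if norm == 0.0:
        raise ValueError("accelerometer reading has zero magnitude")
    # Clamp to guard against rounding pushing the cosine slightly outside [-1, 1].
    cos_tilt = max(-1.0, min(1.0, az / norm))
    return math.degrees(math.acos(cos_tilt))


# A robot standing on a 35-degree incline (g = 9.81 m/s^2):
g = 9.81
incline = math.radians(35)
print(round(body_tilt_deg(g * math.sin(incline), 0.0, g * math.cos(incline)), 1))  # 35.0
```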

However, the earlier system had an important limitation: the robot could adjust its movement only after its feet touched an obstacle. In other words, it reacted to the terrain rather than anticipating it. DreamWaQ++ addresses this limitation.

The new technology combines this internal sensing with external perception from sensors such as cameras and LiDAR. With these sensors, the robot can scan its environment, identify obstacles in advance, and adjust its walking strategy before taking a step.

The result is what researchers call perception-based locomotion: rather than simply reacting, the robot observes, interprets its surroundings, and then decides how to move.
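
The shift from reacting to anticipating can be illustrated with a height profile of the ground ahead, of the kind that can be built from depth-camera or LiDAR points. The sketch below assumes a hypothetical 1-D profile sampled at fixed spacing and a made-up 10 cm step threshold, and it locates the first hazard before any foot reaches it. DreamWaQ++ feeds its perception into a learned controller rather than applying a fixed threshold like this, so treat it only as an illustration of looking ahead.

```python
from typing import Optional, Sequence, Tuple


def first_hazard(
    heights: Sequence[float],
    cell_size_m: float,
    lookahead_m: float,
    max_step_m: float,
) -> Optional[Tuple[float, float]]:
    """Scan a 1-D terrain height profile in the walking direction.

    heights[0] is the ground under the robot; heights[i] is the terrain
    i * cell_size_m ahead. Returns (distance_m, height_change_m) for the first
    cell within lookahead_m whose height differs from the current ground by
    more than max_step_m, or None if nothing ahead exceeds the threshold.
    """
    ground = heights[0]
    n_cells = min(len(heights), int(round(lookahead_m / cell_size_m)) + 1)
    for i in range(1, n_cells):
        change = heights[i] - ground
        if abs(change) > max_step_m:
            return i * cell_size_m, change
    return None


# A flat stretch followed by a 15 cm step, sampled every 10 cm:
profile = [0.0, 0.0, 0.01, 0.0, 0.15, 0.15, 0.30]
print(first_hazard(profile, cell_size_m=0.1, lookahead_m=1.0, max_step_m=0.1))  # (0.4, 0.15)
```

Because the check runs on sensor data ahead of the feet, the step is known while it is still 40 cm away; a purely blind controller would only discover it on contact.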

To make this possible, the research team built a multimodal reinforcement learning architecture. It lets the robot process information from multiple sensors and make decisions in real time while walking.
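
The article does not describe the network itself. A pattern common in learned legged locomotion, and assumed here, is to compress the exteroceptive data into a short feature vector and concatenate it with the proprioceptive readings to form a single input for the policy network. In this minimal sketch the field names and sizes (12 joints, a 6-value IMU entry, 8 height samples) are hypothetical, and average pooling stands in for the learned encoder a real system would train.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Observation:
    joint_pos: List[float]       # proprioceptive: joint encoder angles
    joint_vel: List[float]       # proprioceptive: joint velocities
    imu: List[float]             # proprioceptive: e.g. angular rate and gravity direction
    height_samples: List[float]  # exteroceptive: terrain heights from camera/LiDAR


def pool(samples: List[float], out_len: int) -> List[float]:
    """Average-pool samples into out_len bins (placeholder for a learned encoder)."""
    if out_len <= 0 or len(samples) % out_len:
        raise ValueError("len(samples) must be a positive multiple of out_len")
    k = len(samples) // out_len
    return [sum(samples[i * k:(i + 1) * k]) / k for i in range(out_len)]


def fuse(obs: Observation, extero_len: int = 4) -> List[float]:
    """Concatenate proprioceptive readings with a compressed exteroceptive summary."""
    proprio = obs.joint_pos + obs.joint_vel + obs.imu
    return proprio + pool(obs.height_samples, extero_len)


obs = Observation(
    joint_pos=[0.0] * 12,
    joint_vel=[0.0] * 12,
    imu=[0.0, 0.0, 0.0, 0.0, 0.0, -1.0],
    height_samples=[0.0, 0.0, 0.1, 0.1, 0.2, 0.2, 0.3, 0.3],
)
policy_input = fuse(obs)
print(len(policy_input))  # 34
```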

The algorithm is also designed to keep the robot stable if a sensor fails: when it detects an error in a camera or LiDAR signal, it automatically switches to another sensing method so the robot can keep moving safely.
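
The article does not say how faults are detected or exactly what the controller switches to. A minimal sketch of the fallback idea, assuming a simple health check (data present, expected size, finite values, recent timestamp) and a fixed priority order between two hypothetical sources, could look like this. The source names, the 0.1 s staleness limit, and zero-filling for the proprioception-only case are all illustrative choices.

```python
import math
from typing import List, Optional, Sequence, Tuple

# Each source: (name, samples or None if missing, age of the data in seconds).
Source = Tuple[str, Optional[Sequence[float]], float]


def is_healthy(samples: Optional[Sequence[float]], expected_len: int,
               age_s: float, max_age_s: float) -> bool:
    """Basic health check: data present, right size, finite, and recent."""
    return (
        samples is not None
        and len(samples) == expected_len
        and age_s <= max_age_s
        and all(math.isfinite(x) for x in samples)
    )


def select_terrain_input(sources: List[Source], expected_len: int,
                         max_age_s: float = 0.1) -> Tuple[str, List[float]]:
    """Use the first healthy source in priority order; if none is usable,
    return zeros and signal that the controller should rely on proprioception only."""
    for name, samples, age_s in sources:
        if is_healthy(samples, expected_len, age_s, max_age_s):
            return name, list(samples)
    return "proprioception_only", [0.0] * expected_len


# The LiDAR frame contains a NaN, so the depth camera is used instead.
sources = [
    ("lidar", [0.0, float("nan"), 0.1, 0.1], 0.02),
    ("depth_camera", [0.0, 0.0, 0.1, 0.1], 0.03),
]
print(select_terrain_input(sources, expected_len=4)[0])  # depth_camera
```

Zero-filling keeps the input size constant so the same policy can keep running; whether a real controller handles the fully blind case this way is an assumption.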

The architecture is lightweight enough to run in real time without heavy computing power. According to the team, the design can also be carried over to other robot platforms, such as wheeled-legged robots and, in the future, humanoid robots.

KAIST Robot Tested On Stairs, Slopes

The researchers tested DreamWaQ++ in several challenging environments to measure its performance.

In stair-climbing experiments, the robot completed a 50-step course covering 30.03 meters horizontally and 7.38 meters vertically in 35 seconds, faster than both blind locomotion controllers and commercial perception-based controllers.
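
The reported figures imply a few averages worth spelling out. The short calculation below uses only the numbers given above. The course layout is not described, so the per-step rise and overall incline are averages over the whole course rather than the dimensions of any individual stair.

```python
import math

# Figures reported for the stair-climbing course.
STEPS = 50
RUN_M = 30.03   # horizontal distance
RISE_M = 7.38   # vertical distance
TIME_S = 35.0   # completion time

avg_rise_per_step_m = RISE_M / STEPS
horizontal_speed_mps = RUN_M / TIME_S
vertical_speed_mps = RISE_M / TIME_S
# Overall rise over run of the whole course, expressed as an angle.
average_incline_deg = math.degrees(math.atan2(RISE_M, RUN_M))

print(f"average rise per step: {avg_rise_per_step_m * 100:.1f} cm")  # 14.8 cm
print(f"average horizontal speed: {horizontal_speed_mps:.2f} m/s")   # 0.86 m/s
print(f"average vertical speed: {vertical_speed_mps:.2f} m/s")       # 0.21 m/s
print(f"average incline: {average_incline_deg:.1f} degrees")         # 13.8 degrees
```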

The robot was also tested on steep slopes. It successfully climbed a 35-degree incline, even though it had only been trained on 10-degree slopes.

While climbing, the robot automatically adjusted its posture, which cut the torque required of its rear-leg motors by a factor of about 1.5 compared with earlier methods.
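
Reading "about 1.5" as the ratio of rear-leg torque under earlier methods to torque under DreamWaQ++ (the article gives only the factor, so this interpretation and the symbols below are assumptions), the saving works out to roughly one third:

$$
\frac{\tau_{\text{earlier}}}{\tau_{\text{DreamWaQ++}}} \approx 1.5
\quad\Longrightarrow\quad
\tau_{\text{DreamWaQ++}} \approx \frac{\tau_{\text{earlier}}}{1.5} \approx 0.67\,\tau_{\text{earlier}},
$$

that is, a reduction of about 33 percent in the torque the rear-leg motors must supply.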

In complex obstacle environments, the robot showed another important ability: it chose efficient paths on its own, without relying on pre-programmed route planning.

When the robot encountered uncertain terrain, such as a sudden drop, it displayed exploratory behavior. It paused, examined the ground, and then decided whether to proceed. This behavior closely resembles how animals cautiously inspect unfamiliar surfaces.
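
The article does not describe an explicit rule for this pause; it presents it as behavior of the controller. Purely to make the idea concrete, the hand-written sketch below pauses when too much of the ground ahead could not be measured or when a measured drop is too deep. The function name, the use of `None` for unmeasured cells, and both thresholds are hypothetical.

```python
from typing import Optional, Sequence


def should_pause(heights_ahead: Sequence[Optional[float]], ground_m: float,
                 max_drop_m: float = 0.3, max_unknown_frac: float = 0.3) -> bool:
    """Return True if the robot should stop and re-inspect before stepping.

    None marks a cell the sensors could not measure, e.g. beyond an edge.
    Thresholds are illustrative values, not figures from the article.
    """
    if not heights_ahead:
        return True  # nothing observed ahead: treat as fully uncertain
    unknown = sum(1 for h in heights_ahead if h is None)
    if unknown / len(heights_ahead) > max_unknown_frac:
        return True  # too much of the ground ahead is unmeasured
    measured = [h for h in heights_ahead if h is not None]
    deepest = min(measured, default=ground_m)
    return ground_m - deepest > max_drop_m  # a measured drop deeper than allowed


print(should_pause([0.0, 0.0, None, None, None], ground_m=0.0))  # True
print(should_pause([0.0, 0.0, 0.02, 0.0], ground_m=0.0))         # False
```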

The robot also proved agile: in experiments, it climbed over obstacles 41 centimeters high, taller than its own body, while carrying a 2.5-kilogram payload.

The researchers also tested the system in simulations using other quadrupedal robots. With ANYmal-C, a well-known robot developed at ETH Zurich, DreamWaQ++ handled obstacles up to 1 meter high.

In simulations using KAIST HOUND, a quadrupedal robot developed by Professor Hae-Won Park’s group at KAIST, the system crossed obstacles as high as 1.5 meters.

Although it had been trained only on obstacles about 27 centimeters high, the robot achieved a success rate of around 80 percent when climbing stairs up to 42 centimeters high.

This suggests that the controller is not simply repeating what it learned during training; it can adapt to new environments and solve problems on its own.

The researchers believe the technology could be highly useful where conventional wheeled robots struggle to operate. Possible applications include disaster response, industrial facility inspection, forestry monitoring, and agricultural work on rough terrain.

Professor Hyun Myung said the research marks an important step toward smarter mobile robots.

“This research shows that robots have moved beyond simply walking,” he said. “They now understand their environment and make their own decisions while moving.”

He added that the team plans to expand this technology further so it can power intelligent mobility systems for real-world environments across many industries.