Robots excel at handling simple shapes like boxes but struggle with irregular or curved objects like bananas or cups, limiting their effectiveness in real-world tasks.

Now, researchers from the Swiss Federal Institute of Technology Lausanne (EPFL) and the Idiap Research Institute say they have found a smarter way forward. Their new system enables robots to understand and follow the natural curves of objects, making it easier to handle items such as fruits, tools, and kitchenware. The team published their findings in Science Robotics.

For humans, everyday actions like peeling a potato or slicing a cucumber feel automatic. We adjust our grip and movement without thinking. Robots, however, rely heavily on pre-programmed instructions.

That works well when objects have regular shapes. A box, for example, has flat surfaces and predictable edges. But real-world objects are rarely that simple. Fruits, cups, and tools vary in size, shape, and surface. Even small differences can throw off a robot’s movements.

Because of this, engineers often have to program robots separately for each object or shape. That makes systems less flexible and harder to scale.

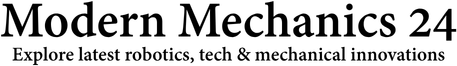

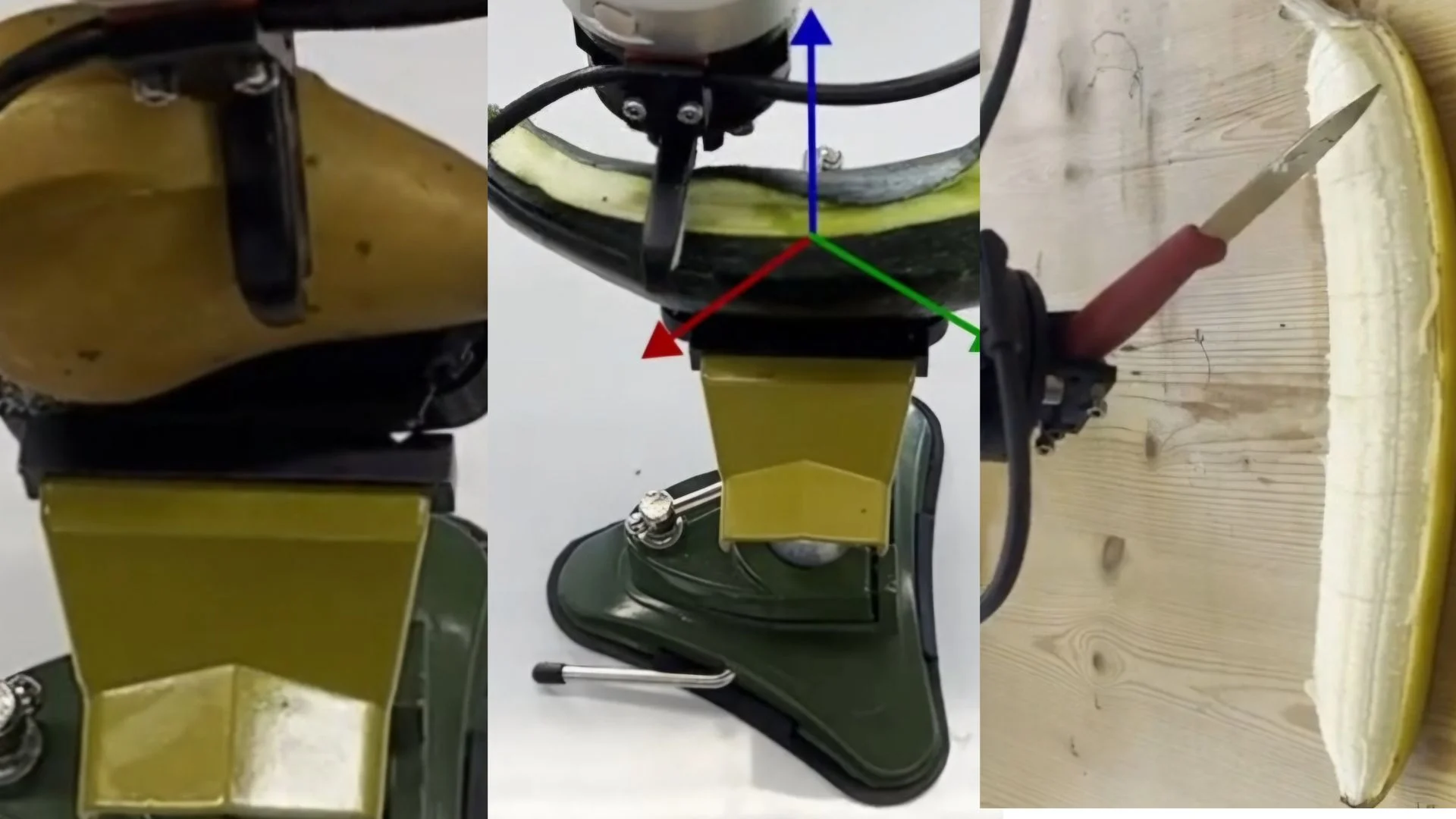

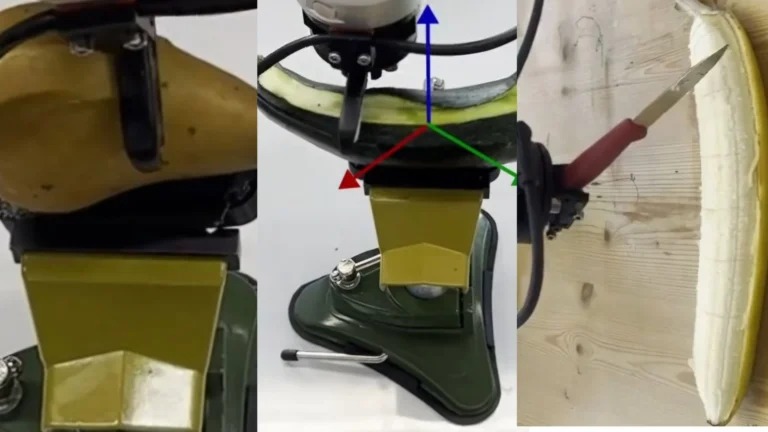

The new approach focuses on an object’s geometry, structure, and curves. Instead of following fixed paths, the robot learns to understand how an object is shaped and how to move along it.

The system starts with a stereo camera. This camera captures a detailed 3D view of an object. From that, the system builds a cloud of points, essentially a digital map of the object’s surface.
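
To make the camera-to-point-cloud step concrete, here is a minimal sketch of how a stereo disparity map can be turned into 3D points with the standard pinhole model. It is an illustration only, not the researchers' pipeline: the function name `disparity_to_points` and the parameters `focal_px`, `baseline_m`, `cx`, and `cy` are assumptions made for this example.

```python
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, float, float]


def disparity_to_points(
    disparity: Sequence[Sequence[Optional[float]]],
    focal_px: float,
    baseline_m: float,
    cx: float,
    cy: float,
    min_disparity: float = 1e-6,
) -> List[Point]:
    """Back-project a disparity map into a list of 3D points.

    Hypothetical helper for illustration. Uses the pinhole relations
    Z = f * B / d, X = (u - cx) * Z / f, Y = (v - cy) * Z / f.
    """
    points: List[Point] = []
    for v, row in enumerate(disparity):
        for u, d in enumerate(row):
            # Skip pixels with no stereo match or non-positive disparity.
            if d is None or d <= min_disparity:
                continue
            z = focal_px * baseline_m / d
            x = (u - cx) * z / focal_px
            y = (v - cy) * z / focal_px
            points.append((x, y, z))
    return points


# Tiny 2x2 disparity map; None and 0.0 mark pixels without usable depth.
cloud = disparity_to_points(
    [[10.0, None], [0.0, 20.0]],
    focal_px=500.0,
    baseline_m=0.1,
    cx=0.5,
    cy=0.5,
)
print(len(cloud), "points recovered")
```

Pixels without a valid match are dropped rather than guessed, which is one reason a real point cloud of an object tends to have holes.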

This map guides the robot’s movements. At every point, the robot knows which direction to follow based on the object’s curves.
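
One simple way to picture "knowing which direction to follow" is a local coordinate frame attached to each surface point. The sketch below builds such a frame from a surface normal and a rough reference direction using Gram-Schmidt projection. This is an assumed, textbook construction for illustration, not the method described in the paper, and the name `local_frame` is hypothetical.

```python
import math
from typing import Sequence, Tuple

Vec = Tuple[float, float, float]


def normalize(v: Sequence[float]) -> Vec:
    """Return v scaled to unit length; reject (near-)zero vectors."""
    n = math.sqrt(sum(c * c for c in v))
    if n < 1e-12:
        raise ValueError("cannot normalize a zero-length vector")
    return (v[0] / n, v[1] / n, v[2] / n)


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a: Sequence[float], b: Sequence[float]) -> Vec:
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def local_frame(normal: Sequence[float], reference: Sequence[float]) -> Tuple[Vec, Vec, Vec]:
    """Build an orthonormal (tangent, bitangent, normal) frame at a surface point.

    The reference direction is projected onto the tangent plane, so the
    tangent is the part of `reference` that runs along the surface.
    Raises ValueError if `reference` is parallel to `normal`.
    """
    n = normalize(normal)
    along_normal = dot(reference, n)
    # Gram-Schmidt: subtract the component of the reference along the normal.
    tangent = normalize(tuple(r - along_normal * c for r, c in zip(reference, n)))
    bitangent = cross(n, tangent)  # completes a right-handed frame
    return tangent, bitangent, n


# A point on the side of a cup-like surface whose normal points along +x;
# the rough direction "toward the rim" is taken to be +z.
t, b, n = local_frame(normal=(1.0, 0.0, 0.0), reference=(0.2, 0.0, 1.0))
print("follow", t, "while keeping the tool oriented along", n)
```

With a frame like this at every point, an instruction such as "move along the surface toward the rim" can be expressed relative to the object rather than as fixed coordinates in space.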

The key innovation lies in how the robot uses this information. Once it learns a task on one object, it can transfer that knowledge to another object with a different shape.

The researchers explain, “Our representation enables task-transfer across shapes addressing the immense shape variation of everyday objects.”

In testing, the robot handled tasks such as peeling, slicing, and cleaning objects it had never seen before. Even when the 3D data from the camera was incomplete or noisy, the system still worked. A built-in mathematical process smooths out errors and fills gaps, keeping the robot functioning.
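
The article does not spell out the mathematical process involved, so the following is only a stand-in to show the general idea: repeatedly averaging each point's direction with those of its nearest neighbors, which both damps noise and spreads values into points where data is missing. The function names and the parameters `k` and `iterations` are assumptions made for this sketch.

```python
import math
from typing import List, Optional, Sequence, Tuple

Vec = Tuple[float, float, float]


def k_nearest(points: Sequence[Vec], i: int, k: int) -> List[int]:
    """Indices of the k points closest to points[i] (brute force)."""
    others = [j for j in range(len(points)) if j != i]
    others.sort(key=lambda j: math.dist(points[i], points[j]))
    return others[:k]


def smooth_directions(
    points: Sequence[Vec],
    directions: Sequence[Optional[Vec]],
    k: int = 2,
    iterations: int = 10,
) -> List[Optional[Vec]]:
    """Smooth per-point unit directions and fill gaps marked as None.

    Each pass replaces a point's direction with the normalized mean of
    its own value (if any) and its neighbors' known values.
    """
    neighbors = [k_nearest(points, i, k) for i in range(len(points))]
    current: List[Optional[Vec]] = list(directions)
    for _ in range(iterations):
        updated: List[Optional[Vec]] = []
        for i in range(len(points)):
            samples = [current[j] for j in neighbors[i] if current[j] is not None]
            if current[i] is not None:
                samples.append(current[i])
            if not samples:
                updated.append(None)  # nothing known nearby yet
                continue
            mean = tuple(sum(s[a] for s in samples) / len(samples) for a in range(3))
            norm = math.sqrt(sum(c * c for c in mean))
            # Keep the old value if neighbors cancel each other out.
            updated.append(tuple(c / norm for c in mean) if norm > 1e-12 else current[i])
        current = updated
    return current


# Four points along a line; the second has no direction, the last is noisy.
pts = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0), (3.0, 0.0, 0.0)]
dirs = [(1.0, 0.0, 0.0), None, (1.0, 0.0, 0.0), (0.9, 0.1, 0.0)]
for d in smooth_directions(pts, dirs):
    print(d)
```

The brute-force neighbor search is fine for a handful of points but would be replaced by a spatial index on a real point cloud.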

The team highlights this strength in the study: “We developed a computationally efficient and robust method for computing these local frames online, even in the presence of partial and noisy sensor data.”

This means the robot does not need perfect information to succeed, a big step toward real-world use.

Despite the progress, the system is not fully autonomous yet. Right now, it depends on a few key points on each object. These points often need to be labeled manually before the robot begins its task.

The researchers want to remove this step in future versions. Automating it would make the system faster and easier to deploy.

Another challenge lies in handling soft objects. Items like sponges or flexible materials change shape when touched. The current system works best with solid objects, so adapting it to softer materials is the next goal.

This research moves robots closer to working smoothly in human environments. Instead of relying on rigid instructions, machines are starting to adapt to the messy, varied world we live in.

If further improved, this technology could play a role in kitchens, factories, and even healthcare settings, wherever objects don’t follow perfect shapes. One thing is clear: teaching robots to understand curves may be the key to making them truly useful in everyday life.