NVIDIA has unveiled the Vera Rubin POD, a new AI supercomputer built for the age of agentic AI.

The platform combines five different rack-scale systems into a single, cohesive machine. It is designed to address the growing problem of AI systems generating trillions of tokens as they interact with one another.

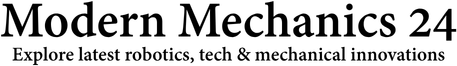

The Vera Rubin POD is not a single computer but a collection of 40 racks working together as one system. It includes nearly 20,000 individual chips, among them 1,152 GPUs.

Chipmaker NVIDIA built this system to meet the demands of modern AI. The platform is built on the company’s third-generation MGX rack architecture. It is the result of co-designing seven different chips for computing, networking, and storage.

Today’s AI is moving from humans talking to chatbots to AI agents talking to each other. This “agentic AI” generates large numbers of reasoning tokens and requires constant, low-latency communication. Older systems struggle to handle this new, complex workflow of planning, tool use, and code execution.

The POD uses five specialized rack systems, each with a specific job. One, the Vera Rubin NVL72, serves as the main computing engine, with 72 GPUs working as a single giant processor. Another, the Groq 3 LPX, handles ultra-fast inference, while a third, built around Vera CPUs, provides sandboxed environments where AI agents can test and validate their work.

This design allows AI factories to run much more efficiently. NVIDIA claims the new system delivers up to 10x better inference performance per watt than its previous Blackwell platform. NVIDIA also says it significantly reduces the cost per token, which could lower operating costs for companies running large-scale AI models.

The system is designed for large-scale data centers with advanced liquid cooling. It requires infrastructure that cools with 45°C warm water to maximize energy efficiency. The platform is already in full production and is scheduled to ship in the second half of 2026.

The Vera Rubin POD shows that AI infrastructure is moving beyond individual chips to entire buildings functioning as a single computer. By unifying computing, networking, and storage, NVIDIA aims to provide the backbone for the next generation of AI applications. This approach is meant to power the world’s most energy- and cost-efficient data centers.