Researchers at the Massachusetts Institute of Technology (MIT) are changing how artificial intelligence is judged. Instead of focusing only on efficiency, they are now emphasizing fairness in AI decision-making.

Artificial intelligence is steadily becoming a silent decision-maker in many parts of modern life. From managing power grids to routing traffic, these systems are designed to find the most efficient solutions. They reduce costs, improve performance, and handle complex calculations faster than humans ever could.

However, a deeper question remains: are these decisions fair? To address this, researchers at MIT have developed a new approach. Rather than focusing only on what AI systems achieve, their work examines who benefits and who might be left behind.

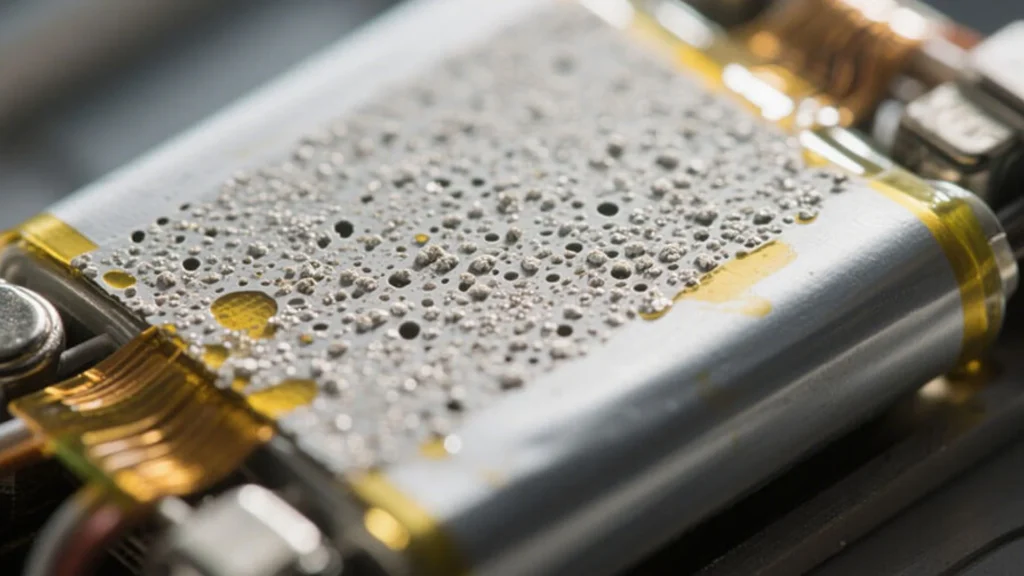

In many real-world situations, the best solution is not always the fairest one. Take the example of a power distribution system. An AI model might identify a strategy that minimizes costs and keeps electricity stable.

On paper, that looks like success. But what if that same strategy results in more frequent outages in poorer neighborhoods, while wealthier areas remain unaffected? This is the kind of hidden imbalance the MIT team set out to uncover.

Led by Chuchu Fan, an associate professor at MIT, the researchers developed a new testing framework to identify ethical risks before AI systems are deployed in the real world.

“We can add rules and guardrails into AI systems,” Fan explains. “But those safeguards only prevent problems we already imagine. We need a way to discover the risks we don’t see coming.”

Her statement reflects a growing concern in the AI community. Many current systems are trained on large datasets and optimized for performance. But fairness, justice, and human values are not always easy to measure or encode into algorithms. That is where the new framework steps in.

MIT’s Different Way to Test AI

The MIT team developed a system called Scalable Experimental Design for System-level Ethical Testing (SEED-SET). The idea behind SEED-SET is simple but powerful. Instead of relying solely on fixed rules or past data, it actively searches for situations where AI decisions might fail to meet ethical standards.

Most existing testing methods depend on pre-labeled data. But ethical judgments, such as fairness or equality, are subjective. They change across communities, cultures, and contexts. This makes them difficult to define in strict numerical terms.

SEED-SET takes a different approach by separating the evaluation process into two parts.

The first part looks at objective performance. This includes measurable factors such as cost, efficiency, and reliability. The second part focuses on human values. This includes fairness, equity, and how different groups perceive the outcome. By splitting these two layers, the system can better understand where technical success clashes with ethical concerns.

How the System Works

The framework uses a hierarchical structure to evaluate decisions. First, an objective model assesses how well an AI system performs using clear metrics. For example, it may assess how efficiently electricity is distributed or how well traffic flows.

Then, a subjective model builds on that analysis. This layer evaluates how different groups feel about those outcomes. To simulate human judgment, the system uses a large language model (LLM). It acts as a proxy for human evaluators.

The researchers give the model prompts that describe the preferences of different stakeholders. These could include rural communities, urban users, and industrial players such as data centers. The LLM then compares scenarios and selects the one that best aligns with the stated ethical values.

Lead author Anjali Parashar explains the reasoning behind this choice. “If humans review hundreds of scenarios, they can become tired and inconsistent. Using an LLM helps maintain consistency in evaluations.”

Once a scenario is selected, the system simulates it and uses the results to guide the next round of testing. Over time, SEED-SET identifies the most meaningful scenarios, both good and problematic ones.
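To make the loop described above concrete, here is a minimal Python sketch. It is not the researchers' implementation: the toy grid model, the names `objective_model`, `judge`, `perturb`, and `search`, and every number in it are assumptions made for illustration. In particular, the `judge` function is a simple rule that stands in for the LLM proxy, and the "guide the next round" step is a plain local search around the most concerning scenario found so far.

```python
import random

def objective_model(scenario):
    """Toy objective layer (assumed): measurable outcomes for one grid scenario."""
    demand, bias = scenario["peak_demand"], scenario["bias"]
    shortfall = max(0.0, demand - 0.6)  # unmet demand above a made-up capacity
    return {
        "cost": 10 + 5 * demand - 2 * bias,            # assumed cost relationship
        "outage_low_income": shortfall * bias,          # bias = share of shortfall pushed to low-income areas
        "outage_high_income": shortfall * (1 - bias),
    }

def outage_gap(outcome):
    """How much worse low-income areas fare than high-income areas."""
    return outcome["outage_low_income"] - outcome["outage_high_income"]

def judge(outcome_a, outcome_b):
    """Stand-in for the LLM proxy: return 0 or 1 for the more concerning outcome."""
    return 1 if outage_gap(outcome_b) > outage_gap(outcome_a) else 0

def perturb(scenario, rng, step=0.1):
    """Propose a nearby scenario, keeping every parameter inside [0, 1]."""
    return {k: min(1.0, max(0.0, v + rng.uniform(-step, step)))
            for k, v in scenario.items()}

def search(rounds=20, pool=6, seed=0):
    rng = random.Random(seed)
    candidates = [{"peak_demand": rng.random(), "bias": rng.random()}
                  for _ in range(pool)]
    history = []
    for _ in range(rounds):
        # Pairwise comparisons pick the scenario the judge finds most concerning.
        best = candidates[0]
        for cand in candidates[1:]:
            if judge(objective_model(best), objective_model(cand)) == 1:
                best = cand
        history.append(outage_gap(objective_model(best)))
        # The selected scenario seeds the next round of candidates.
        candidates = [best] + [perturb(best, rng) for _ in range(pool - 1)]
    return best, history

best, history = search()
```

Because the current best scenario is always carried into the next round, the recorded gap in `history` never shrinks; the search keeps drifting toward scenarios where the imbalance is largest. A real system would replace `judge` with calls to an LLM prompted with stakeholder preferences, and would use a far richer simulator.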

Finding the ‘Unknown Unknowns’

One of the biggest strengths of this framework is its ability to uncover unexpected issues. Traditional systems often test for known risks. But SEED-SET actively searches for ‘unknown unknowns’: problems that developers did not anticipate.

For example, in a power grid simulation, the system might discover that during peak demand, electricity is consistently directed toward high-income areas. Meanwhile, lower-income neighborhoods face higher risks of outages.

These patterns might not be obvious in standard testing. But SEED-SET highlights them clearly.
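One simple way to surface an imbalance like the one in the power grid example is to compare outage rates across groups. The Python sketch below is only an illustration of that idea, not a metric taken from the research: the function names, the group labels, and the sample records are all hypothetical.

```python
from collections import defaultdict

def outage_rates(records):
    """records: iterable of (group, hours_observed, outage_hours) tuples."""
    totals = defaultdict(lambda: [0.0, 0.0])
    for group, hours, outage in records:
        totals[group][0] += hours
        totals[group][1] += outage
    return {group: out / hrs for group, (hrs, out) in totals.items()}

def disparity_ratio(rates, group, reference):
    """Ratio of one group's outage rate to a reference group's rate."""
    if rates[reference] == 0:
        return float("inf")  # the reference group had no outages at all
    return rates[group] / rates[reference]

# Made-up simulation output, for illustration only.
records = [
    ("low_income", 1000, 40),
    ("low_income", 1000, 60),
    ("high_income", 1000, 10),
    ("high_income", 1000, 10),
]
rates = outage_rates(records)
ratio = disparity_ratio(rates, "low_income", "high_income")
print(f"{ratio:.1f}")  # about 5: low-income areas lose power roughly five times as often
```

An average outage rate over all four records would hide this gap entirely, which is why a per-group breakdown is useful.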

“We don’t want to waste resources on random evaluations,” says researcher Yingke Li. “We want to focus on the scenarios that matter most.”

This targeted approach makes the process more efficient. It also ensures that critical ethical issues are not overlooked.

Adapting to Real-World Complexity

Modern systems often serve multiple groups with different priorities. In a power grid, for instance, a rural community might value reliability above all else. A data center, on the other hand, might prioritize uninterrupted supply, even at higher costs.

Balancing these competing interests is not easy. SEED-SET allows users to define these preferences in flexible ways. It does not require pre-existing datasets or rigid rules. As user preferences change, the system adapts.

During testing, the researchers observed that even small changes in stakeholder priorities led to very different outcomes. This shows that the framework is sensitive to human values.
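A toy example shows why small shifts in priorities can flip the result. The sketch below swaps in a plain weighted sum for the LLM-based comparison the article describes, so it should be read as an illustration of the sensitivity, not of SEED-SET itself. The strategy names, metric scores, and weights are all invented.

```python
def preferred(strategies, weights):
    """Return the name of the strategy with the highest weighted score."""
    def score(metrics):
        return sum(weights[name] * metrics[name] for name in weights)
    return max(strategies, key=lambda name: score(strategies[name]))

# Hypothetical strategies, each scored from 0 to 1 on two criteria.
strategies = {
    "cheap_grid": {"affordability": 0.9, "reliability": 0.5},
    "robust_grid": {"affordability": 0.5, "reliability": 0.9},
}

# Two stakeholders whose priorities differ by only ten percentage points.
cost_focused = preferred(strategies, {"affordability": 0.55, "reliability": 0.45})
uptime_focused = preferred(strategies, {"affordability": 0.45, "reliability": 0.55})

print(cost_focused)    # cheap_grid
print(uptime_focused)  # robust_grid
```

Neither strategy is better in absolute terms. Which one wins depends entirely on whose priorities are used to judge it.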

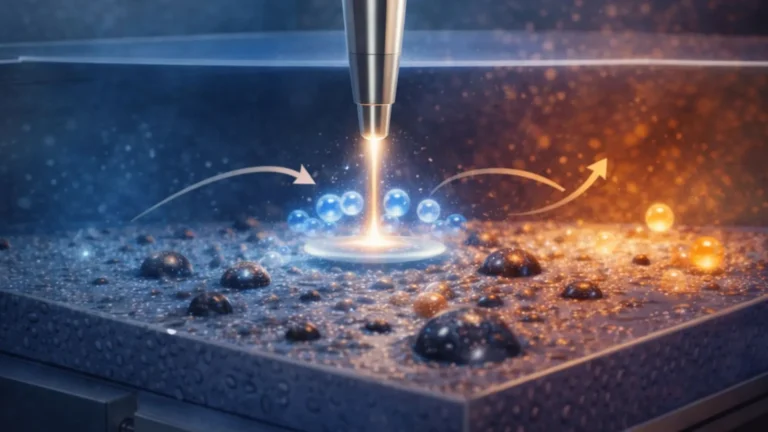

The MIT team applied SEED-SET to several real-world scenarios. These included an AI-driven power grid and an urban traffic routing system.

In these cases, the framework generated more than twice as many useful test scenarios as traditional methods within the same amount of time. More importantly, it uncovered issues that other approaches missed. This suggests that current evaluation methods may not be enough to ensure ethical AI deployment.

Why This Matters Now

AI systems are used in high-stakes environments. They influence decisions in energy, transportation, healthcare, and even public policy.

When these systems fail, the consequences are not just technical; they are social. Unfair decisions can deepen inequality, reduce trust, and harm vulnerable communities. That is why early detection of ethical risks is critical.

SEED-SET provides a practical way to do that. It helps developers, policymakers, and organizations understand how AI decisions impact different groups. And it gives them a chance to fix problems before they reach the real world.

The researchers believe this is just the beginning. They plan to conduct user studies to see how well the framework supports real decision-making. They also aim to scale the system to handle larger, more complex problems. This includes evaluating the ethical behavior of large language models themselves.

As AI systems continue to evolve, so must the tools used to test them. SEED-SET represents a shift in thinking, from simply optimizing performance to actively questioning fairness. It reminds us that efficiency alone is not enough.

In a world shaped by algorithms, the true measure of success lies in how those systems treat people. Sometimes, the hardest question is not what AI can do, but what it should do.

This research was funded, in part, by the US Defense Advanced Research Projects Agency.