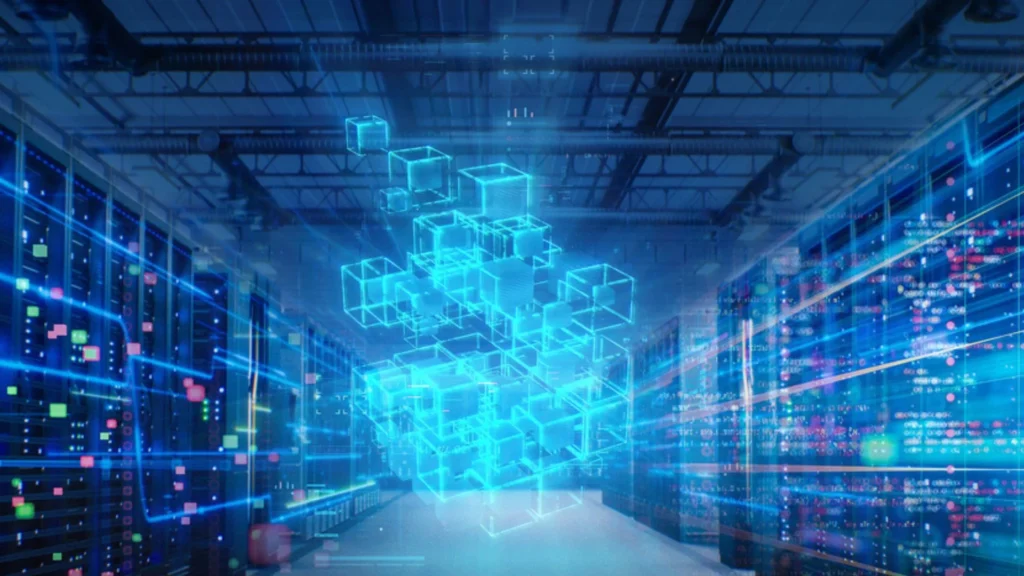

Researchers at the Massachusetts Institute of Technology have developed a new software system that tackles a persistent inefficiency in data centers: storage devices that rarely deliver their full performance.

Instead of adding more hardware, their approach focuses on using existing resources more intelligently. The result is a system that can significantly improve performance while reducing the need for costly upgrades.

Data centers are the backbone of modern digital services. From streaming videos to running artificial intelligence models, they depend heavily on storage devices to process and move data quickly. These storage devices, especially solid-state drives (SSDs), are designed to be fast. But in real-world conditions, they often fail to deliver their full potential.

To improve efficiency, companies usually pool multiple SSDs together over a network. This allows several applications to share storage resources. In theory, this should maximize usage. In reality, a large portion of storage capacity still goes unused. The reason lies in performance differences between devices.

Not all SSDs behave the same way. Some are older, some are newer. Some have been used heavily, while others are relatively fresh. These differences create uneven performance. And in a pooled system, even one slower device can drag down the performance of the entire group.

Recognizing this problem, MIT researchers designed a system called ‘Sandook.’ The name comes from an Urdu word meaning box, symbolizing storage. Sandook is a software-based solution that improves how workloads are distributed across SSDs.

Unlike traditional approaches that focus on fixing one issue at a time, Sandook addresses three major sources of variability together. This makes it far more effective in improving overall performance.

The first source of variability comes from hardware differences. SSDs may vary in age, capacity, and wear. These differences affect how fast they can process data.

The second issue concerns how SSDs handle reading and writing data. Before new data can be written, the device must first erase existing data. Reads that arrive on the same device while this is happening have to wait, which creates delays.

The third factor is something called garbage collection. SSDs periodically clean up outdated data to free space. This process happens at unpredictable times and slows down performance while it runs.

Gohar Chaudhry, a graduate student at MIT and lead author of the study, explains the challenge clearly. She says, “I can’t assume all SSDs will behave the same throughout their lifecycle. Even if I assign the same workload, some will slow down and affect overall performance.”

To solve these problems, Sandook uses a two-tier system. It combines a global controller and multiple local controllers.

The global controller looks at the big picture. It decides how tasks should be distributed across all SSDs in the system. It ensures that no single device is overloaded.

At the same time, local controllers operate on each machine. They react quickly to sudden changes. If one SSD starts slowing down, the local controller immediately shifts some tasks to other devices. This combination of planning and quick reaction makes Sandook highly adaptive.

Chaudhry describes this approach simply. She says, “We plan globally but react locally. That helps us handle both long-term and short-term changes in performance.”
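The "plan globally, react locally" idea can be illustrated with a minimal Python sketch. This is not Sandook's code: the class name `LocalController`, the 5 ms latency threshold, and the rule of shedding half of a slow device's share are all assumptions made for illustration. The plan is taken as given from a global controller, and the local controller only adjusts it when a device looks slow.

```python
from dataclasses import dataclass, field


@dataclass
class LocalController:
    """Hypothetical per-machine controller that follows a global plan."""

    plan: dict[str, float]            # device -> planned share of requests
    slow_threshold_ms: float = 5.0    # assumed cut-off for a "slow" device
    shed_fraction: float = 0.5        # assumed fraction of work moved away
    latency_ms: dict[str, float] = field(default_factory=dict)

    def observe(self, device: str, latency_ms: float) -> None:
        """Record the most recent latency measured for a device."""
        self.latency_ms[device] = latency_ms

    def effective_shares(self) -> dict[str, float]:
        """Cut the share of slow devices and renormalize the rest."""
        adjusted = {}
        for device, share in self.plan.items():
            slow = self.latency_ms.get(device, 0.0) > self.slow_threshold_ms
            adjusted[device] = share * (1.0 - self.shed_fraction) if slow else share
        total = sum(adjusted.values())
        if total == 0:
            return dict(self.plan)
        return {device: share / total for device, share in adjusted.items()}


controller = LocalController(plan={"ssd0": 0.5, "ssd1": 0.5})
controller.observe("ssd1", 12.0)  # ssd1 suddenly slows down
print(controller.effective_shares())
```

In this toy version the slow device keeps some work rather than being cut off entirely, which mirrors the article's description of shifting "some tasks" to other devices.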

One of Sandook’s key strategies is to rotate tasks across SSDs. Instead of allowing reads and writes to happen on the same device at the same time, it spreads them out. This reduces interference and improves speed.
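One simple way to picture this rotation is a round-robin schedule in which, during each time slot, a few devices accept writes while the rest serve reads. The Python sketch below is an illustration only. The function names, the fixed time slots, and the assumption that data is available on more than one device (so reads can go elsewhere) are not taken from the article.

```python
def write_devices(devices: list[str], slot: int, writers_per_slot: int = 1) -> list[str]:
    """Return the devices that accept writes in this time slot (round-robin)."""
    n = len(devices)
    start = (slot * writers_per_slot) % n
    return [devices[(start + i) % n] for i in range(writers_per_slot)]


def route(op: str, devices: list[str], slot: int, writers_per_slot: int = 1) -> list[str]:
    """Return candidate devices for an operation, keeping reads off the writers."""
    writers = write_devices(devices, slot, writers_per_slot)
    if op == "write":
        return writers
    # Reads go to every device that is not absorbing writes in this slot.
    return [d for d in devices if d not in writers]


pool = ["ssd0", "ssd1", "ssd2"]
for slot in range(3):
    print(slot, route("write", pool, slot), route("read", pool, slot))
```

Each device takes its turn absorbing writes, so no device serves reads and writes in the same slot.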

The system also builds performance profiles for each SSD. These profiles help predict when garbage collection might slow down a device. When that happens, Sandook reduces the workload on that SSD and shifts tasks to other SSDs.

Chaudhry explains this approach in practical terms. She says, “If a device is busy cleaning up data, we give it less work for a while. Then we slowly increase its load again once it recovers.”

This dynamic adjustment helps maintain steady performance across the system.
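The "less work for a while, then slowly more" behavior Chaudhry describes resembles a back-off and ramp-up rule. The Python sketch below shows one such rule under stated assumptions: the function name `next_weight`, the halving factor, the floor of 0.2, and the recovery step of 0.1 are hypothetical values chosen for illustration, not parameters from the study.

```python
def next_weight(weight: float, in_gc: bool,
                floor: float = 0.2, backoff: float = 0.5, step: float = 0.1) -> float:
    """Update a device's load weight, a value between `floor` and 1.0.

    While the device appears to be garbage collecting, cut its weight
    quickly; once it recovers, raise the weight in small steps.
    """
    if in_gc:
        return max(floor, weight * backoff)
    return min(1.0, weight + step)


weight = 1.0
# Three intervals of garbage collection, followed by recovery.
for in_gc in [True, True, True, False, False, False]:
    weight = next_weight(weight, in_gc)
    print(f"in_gc={in_gc} weight={weight:.2f}")
```

Cutting quickly and recovering slowly keeps a device from being flooded again the moment it finishes cleaning up.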

Another advantage of Sandook is that it assigns workloads based on each SSD’s performance. Faster, healthier devices handle more work, while slower ones are assigned lighter tasks. This balanced approach ensures better use of all available resources.
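Assigning work in proportion to each device's measured performance can be sketched in a few lines of Python. The function name `assign_requests` and the capacity figures are hypothetical, and the largest-remainder rounding is just one reasonable way to hand out whole requests. The article does not describe how Sandook computes its assignments.

```python
def assign_requests(total: int, capacity: dict[str, float]) -> dict[str, int]:
    """Split `total` requests across devices in proportion to capacity."""
    cap_sum = sum(capacity.values())
    if cap_sum <= 0:
        raise ValueError("at least one device must have positive capacity")
    exact = {d: total * c / cap_sum for d, c in capacity.items()}
    assigned = {d: int(x) for d, x in exact.items()}
    # Hand out the requests lost to rounding, largest remainder first.
    leftover = total - sum(assigned.values())
    by_remainder = sorted(capacity, key=lambda d: exact[d] - assigned[d], reverse=True)
    for device in by_remainder[:leftover]:
        assigned[device] += 1
    return assigned


# Hypothetical relative capacities for a new, a mid-life, and a worn SSD.
print(assign_requests(100, {"new": 3.0, "mid": 2.0, "old": 1.0}))
```

The worn device still receives work, only less of it, so its capacity is not left idle.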

To test their system, the researchers ran Sandook on a pool of 10 SSDs. They evaluated its performance across four different tasks: running databases, training AI models, compressing images, and storing user data.

Sandook improved performance by 12 to 94 percent compared to traditional static systems. It also increased overall SSD utilization by 23 percent. In some cases, the system helped devices achieve up to 95 percent of their theoretical maximum performance.

What makes this even more significant is that all of this was achieved without any specialized hardware. The system runs entirely on software, making it easier to adopt in existing data centers.

Chaudhry emphasizes the importance of this approach. She says, “There is a tendency to add more hardware to solve problems, but that is not sustainable. Our system shows that we can get more value from what we already have.”

This idea has broader implications beyond performance. Data centers consume large amounts of energy and contribute to carbon emissions. Extending the life and efficiency of existing hardware can reduce environmental impact.

The research team includes Ankit Bhardwaj from Tufts University, Zhenyuan Ruan, and senior author Adam Belay from MIT’s Computer Science and Artificial Intelligence Laboratory. Their work will be presented at the USENIX Symposium on Networked Systems Design and Implementation, a major conference in computer systems.

The project has received funding from organizations such as the National Science Foundation, the Defense Advanced Research Projects Agency, and the Semiconductor Research Corporation.

Experts outside the research team have also praised the work. Josh Fried, a software engineer at Google, highlights its importance.

He says, “Flash storage powers modern data centers, but managing it efficiently remains a challenge. This work offers a practical solution that can be used in real systems.”

Looking ahead, the researchers plan to further enhance Sandook. They want to integrate new SSD technologies that allow better control over data placement. They are also exploring ways to leverage predictable AI workloads to further improve performance.

As data demands continue to grow, solutions like Sandook offer a new direction. Instead of building bigger systems, they show how smarter software can unlock hidden potential. In a world where efficiency matters as much as scale, this shift could redefine how data centers operate in the years to come.